Expected Improvement#

This section describes one of the widely used methods for performing balanced exploration and exploitation. Specifically, expected improvement (EI) is used as the acquisition function. The auxiliary optimization problem is written as:

where EI is written as

where \(\Phi\) and \(\phi\) are cumulative distribution and probability density function of the standard normal distribution, respectively. The \(f^*\) is the current best value, and \(\mu(\mathbf{x})\) and \(\sigma(\mathbf{x})\) are model prediction and uncertainty in prediction (standard deviation) from surrogate model, respectively. The \(\textrm{Z}\) is a standard normal variable that is computed as

Note that \(\hat{f}\) follows a normal distribution since it is modeled using a GP. The EI is a measure of the expected improvement of surrogate model at \(x\) over the best observed value. The first term in EI represents exploitation and the second term represents exploration. Note that the acquisition function optimization is a maximization problem.

Below code imports required packages and defines modified branin function:

import numpy as np

import torch

from pyDOE3 import lhs

import matplotlib.pyplot as plt

from scipy.stats import norm as normal

from scimlstudio.models import SingleOutputGP

from scimlstudio.utils import Standardize, Normalize

from gpytorch.mlls import ExactMarginalLogLikelihood

from pymoo.core.problem import Problem

from pymoo.algorithms.soo.nonconvex.de import DE

from pymoo.optimize import minimize

from pymoo.config import Config

Config.warnings['not_compiled'] = False

# Defining the device and data types

args = {

"device": torch.device('cuda' if torch.cuda.is_available() else 'cpu'),

"dtype": torch.float64

}

def modified_branin(x: np.ndarray) -> np.ndarray:

"""

Function for computing modified branin function value at given input points

"""

x = np.atleast_2d(x)

x1 = x[:,0]

x2 = x[:,1]

a = 1.

b = 5.1 / (4.*np.pi**2)

c = 5. / np.pi

r = 6.

s = 10.

t = 1. / (8.*np.pi)

y = a * (x2 - b*x1**2 + c*x1 - r)**2 + s*(1-t)*np.cos(x1) + s + 5*x1

return np.expand_dims(y,-1)

lb = np.array([-5., 0.])

ub = np.array([10., 15.])

/home/pkoratik/miniconda3/envs/sm/lib/python3.12/site-packages/tqdm/auto.py:21: TqdmWarning: IProgress not found. Please update jupyter and ipywidgets. See https://ipywidgets.readthedocs.io/en/stable/user_install.html

from .autonotebook import tqdm as notebook_tqdm

Below code block defines pymoo problem class and initializes differential evolution algorithm:

class ExpectedImprovement(Problem):

def __init__(self, gp, lb: np.ndarray, ub: np.ndarray, fbest: float):

"""

Class for defining auxiliary optimization problem that uses

expected improvment as the acquisition function

"""

# initialize parent class

super().__init__(n_var=lb.shape[0], n_obj=1, n_constr=0, xl=lb, xu=ub)

# store variables

self.gp = gp

self.fbest = fbest

def _evaluate(self, x, out, *args, **kwargs):

# convert to torch tensor

x = torch.from_numpy(x).to(self.gp.x_train)

# get mean prediction and std in prediction

y_mean, y_std = self.gp.predict(x)

# numerator of Z

numerator = self.fbest - y_mean.numpy(force=True)

# denominator of Z

denominator = y_std.numpy(force=True)

# std variable

z = numerator / denominator

# compute ei

ei = numerator * normal.cdf(z) + denominator * normal.pdf(z)

out["F"] = - ei # negating since pymoo minimizes

# Optimization algorithm

algorithm = DE(pop_size=15*lb.shape[0], F=0.9, CR=0.8, seed=1)

BO loop#

Below code implements BO loop with EI-based acquisition function. Four initial samples are used with a maximum function evaluation budget of 12. This implies that there will be 8 iterations of the loop. The initial samples are generated using latin hypercube sampling. A Gaussian process model is used to approximate the modified Branin function since it provides both point-prediction and uncertainty in prediction.

# variables

num_init = 4

max_evals = 24

num_evals = 0

# initial training data

x_train = lhs(lb.shape[0], samples=num_init, criterion='cm', iterations=100, seed=1)

x_train = lb + (ub - lb) * x_train

y_train = modified_branin(x_train)

# increment evals

num_evals += num_init

idx_best = np.argmin(y_train)

fbest = [y_train[idx_best]]

xbest = [x_train[idx_best]]

print("Current best before loop:")

print("x: {}".format(xbest[-1]))

print("f: {}".format(fbest[-1]))

print("\nLCB Loop:")

# loop

while num_evals < max_evals:

print(f"\nIteration: {num_evals-num_init+1}")

# GP

gp = SingleOutputGP(

x_train=torch.from_numpy(x_train).to(**args),

y_train=torch.from_numpy(y_train).to(**args),

output_transform=Standardize,

input_transform=Normalize,

)

mll = ExactMarginalLogLikelihood(gp.likelihood, gp) # marginal log likelihood

optimizer = torch.optim.Adam(gp.parameters(), lr=0.01) # optimizer

# Training the model

gp.fit(training_iterations=1000, mll=mll, optimizer=optimizer)

# Find the minimum of surrogate model

result = minimize(ExpectedImprovement(gp, lb, ub, fbest[-1]), algorithm, verbose=False)

# Computing true function value at infill point

y_infill = modified_branin(result.X)

print("New point (based on EI):")

print("x: {}".format(result.X))

print("f: {}".format(y_infill.item()))

# Appending the the new point to the current data set

x_train = np.vstack(( x_train, result.X.reshape(1,-1) ))

y_train = np.vstack((y_train, y_infill))

# increment evals

num_evals += 1

# Find current best point

idx_best = np.argmin(y_train)

fbest.append(y_train[idx_best])

xbest.append(x_train[idx_best])

print("Current best:")

print("x: {}".format(xbest[-1]))

print("f: {}".format(fbest[-1]))

fbest = np.array(fbest)

xbest = np.array(xbest)

Current best before loop:

x: [-3.125 5.625]

f: [28.46841701]

LCB Loop:

Iteration: 1

New point (based on EI):

x: [-1.44474737 2.86820736]

f: 36.4828102110335

Current best:

x: [-3.125 5.625]

f: [28.46841701]

Iteration: 2

New point (based on EI):

x: [9.15050074e+00 1.13605816e-15]

f: 51.58693605710514

Current best:

x: [-3.125 5.625]

f: [28.46841701]

Iteration: 3

New point (based on EI):

x: [2.52016071 4.07720156]

f: 16.40087058902683

Current best:

x: [2.52016071 4.07720156]

f: [16.40087059]

Iteration: 4

New point (based on EI):

x: [7.84180119 3.64498127]

f: 54.08509173121499

Current best:

x: [2.52016071 4.07720156]

f: [16.40087059]

Iteration: 5

New point (based on EI):

x: [0.70576 5.2777565]

f: 20.95048999706556

Current best:

x: [2.52016071 4.07720156]

f: [16.40087059]

Iteration: 6

New point (based on EI):

x: [-5. 8.77251162]

f: 58.533427546649136

Current best:

x: [2.52016071 4.07720156]

f: [16.40087059]

Iteration: 7

New point (based on EI):

x: [1.62160246 3.95469854]

f: 17.658735556526207

Current best:

x: [2.52016071 4.07720156]

f: [16.40087059]

Iteration: 8

New point (based on EI):

x: [-5. 15.]

f: -7.49170048422188

Current best:

x: [-5. 15.]

f: [-7.49170048]

Iteration: 9

New point (based on EI):

x: [-4.10938247 15. ]

f: -15.915094867475982

Current best:

x: [-4.10938247 15. ]

f: [-15.91509487]

Iteration: 10

New point (based on EI):

x: [-3.63080849 15. ]

f: -14.324367878952765

Current best:

x: [-4.10938247 15. ]

f: [-15.91509487]

Iteration: 11

New point (based on EI):

x: [-4.01910093 14.05331837]

f: -16.047006839888095

Current best:

x: [-4.01910093 14.05331837]

f: [-16.04700684]

Iteration: 12

New point (based on EI):

x: [-4.03530581 14.51306039]

f: -16.1922996341998

Current best:

x: [-4.03530581 14.51306039]

f: [-16.19229963]

Iteration: 13

New point (based on EI):

x: [-2.96284418 11.48854598]

f: -14.133017439291134

Current best:

x: [-4.03530581 14.51306039]

f: [-16.19229963]

Iteration: 14

New point (based on EI):

x: [ 4.84396335 15. ]

f: 222.5733852112459

Current best:

x: [-4.03530581 14.51306039]

f: [-16.19229963]

Iteration: 15

New point (based on EI):

x: [10. 5.12283839]

f: 56.43703946550755

Current best:

x: [-4.03530581 14.51306039]

f: [-16.19229963]

Iteration: 16

New point (based on EI):

x: [10. 15.]

f: 195.87219087939556

Current best:

x: [-4.03530581 14.51306039]

f: [-16.19229963]

Iteration: 17

New point (based on EI):

x: [-3.68262418 13.62254088]

f: -16.64374924519494

Current best:

x: [-3.68262418 13.62254088]

f: [-16.64374925]

Iteration: 18

New point (based on EI):

x: [2.76384330e+00 1.83592494e-10]

f: 21.5919553856424

Current best:

x: [-3.68262418 13.62254088]

f: [-16.64374925]

Iteration: 19

New point (based on EI):

x: [1.00000000e+01 1.56571998e-13]

f: 60.96088903565051

Current best:

x: [-3.68262418 13.62254088]

f: [-16.64374925]

Iteration: 20

New point (based on EI):

x: [-3.68409005 13.61927722]

f: -16.64390454345944

Current best:

x: [-3.68409005 13.61927722]

f: [-16.64390454]

/home/pkoratik/miniconda3/envs/sm/lib/python3.12/site-packages/gpytorch/distributions/multivariate_normal.py:376: NumericalWarning: Negative variance values detected. This is likely due to numerical instabilities. Rounding negative variances up to 1e-10.

warnings.warn(

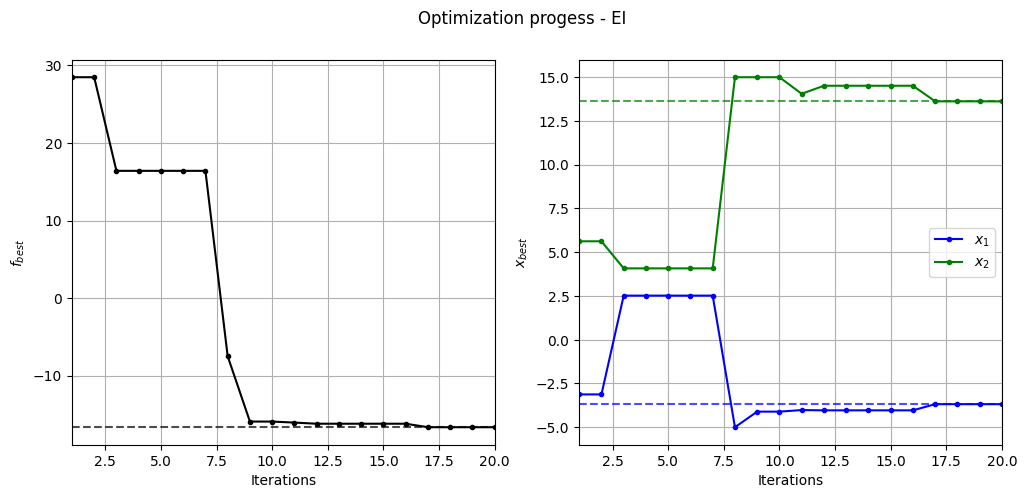

Below code plots the evolution of optimum point with respect to number of iterations:

fig, ax = plt.subplots(1, 2, figsize=(12, 5))

ax[0].plot(fbest, ".k-")

ax[0].set_xlabel("Iterations")

ax[0].set_ylabel("$f_{best}$")

ax[0].axhline(y=-16.64, c="k", linestyle="--", alpha=0.7)

ax[0].set_xlim(left=1, right=fbest.shape[0]-1)

ax[0].grid()

ax[1].plot(xbest[:,0], ".b-", label="$x_1$")

ax[1].plot(xbest[:,1], ".g-", label="$x_2$")

ax[1].axhline(y=-3.689, c="b", linestyle="--", alpha=0.7)

ax[1].axhline(y=13.630, c="g", linestyle="--", alpha=0.7)

ax[1].set_xlim(left=1, right=xbest.shape[0]-1)

ax[1].set_ylabel("$x_{best}$")

ax[1].set_xlabel("Iterations")

ax[1].legend()

ax[1].grid()

_ = plt.suptitle("Optimization progess - EI")

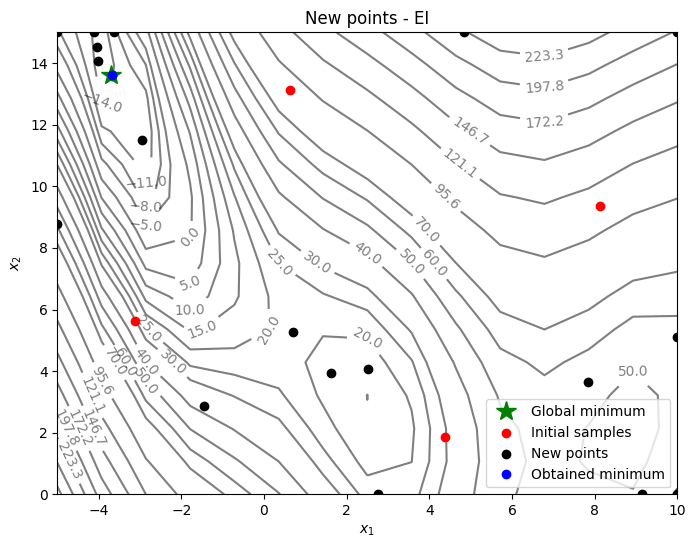

Below code plots the infill points added during the optimization process:

num_pts_per_dim = 15

x1 = np.linspace(lb[0], ub[0], num_pts_per_dim)

x2 = np.linspace(lb[1], ub[1], num_pts_per_dim)

X1, X2 = np.meshgrid(x1, x2)

x = np.hstack(( X1.reshape(-1,1), X2.reshape(-1,1) ))

Z = modified_branin(x).reshape(num_pts_per_dim, num_pts_per_dim)

# Level

levels = np.linspace(-17, -5, 5)

levels = np.concatenate((levels, np.linspace(0, 30, 7)))

levels = np.concatenate((levels, np.linspace(40, 60, 3)))

levels = np.concatenate((levels, np.linspace(70, 300, 10)))

fig, ax = plt.subplots(figsize=(8,6))

# Contours and global opt

CS=ax.contour(X1, X2, Z, levels=levels, colors='k', linestyles='solid', alpha=0.5, zorder=-10)

ax.clabel(CS, inline=1)

ax.plot(-3.689, 13.630, 'g*', markersize=15, label="Global minimum")

# Points

ax.scatter(x_train[0:num_init,0], x_train[0:num_init,1], c="red", label='Initial samples')

ax.scatter(x_train[num_init:,0], x_train[num_init:,1], c="black", label='New points')

ax.plot(xbest[-1][0], xbest[-1][1], 'bo', label="Obtained minimum")

# asthetics

ax.legend()

ax.set_xlabel("$x_1$")

ax.set_ylabel("$x_2$")

_ = ax.set_title("New points - EI")

From the above plots, it can be seen that EI finds the minimum of modified branin function while balancing exploration and exploitation. The EI adds some points in the unexplored regions before converging to global minimum.

NOTE: Due to randomness in differential evolution and GP training, results may vary slightly between runs. So, it is recommended to run the code multiple times to see average behavior.